Artificial Intelligence unlocks the value of digital assets

In China, more than 280 million people watched the game live, marvelling at a machine’s strategic mastery over man. However, not all AI developments are winners. On the flip side of AlphaGo’s triumph was Tay, the chatbot released by Microsoft that was trained to respond like a millennial. In short order, its vulnerabilities were exposed and Tay, mimicking users, transformed into a racist, xenophobic, misogynistic chatterbox.

Whoops.

Nonetheless, AI is rapidly on the rise with mind-boggling potential, from fighting cancer and creating original art to seeing for the blind. And AI is advancing in tandem with a precipitous shift on the web, which is moving more and more from text to images.

Each day, two billion photos are shared online, keeping pace with the surge toward visually driven apps such as Snapchat and Instagram. Bringing AI together with a glut of photos has driven image recognition technology into high gear, yielding great gains for retailers, advertisers and consumers, but most importantly, publishers.

The ABCs of AI

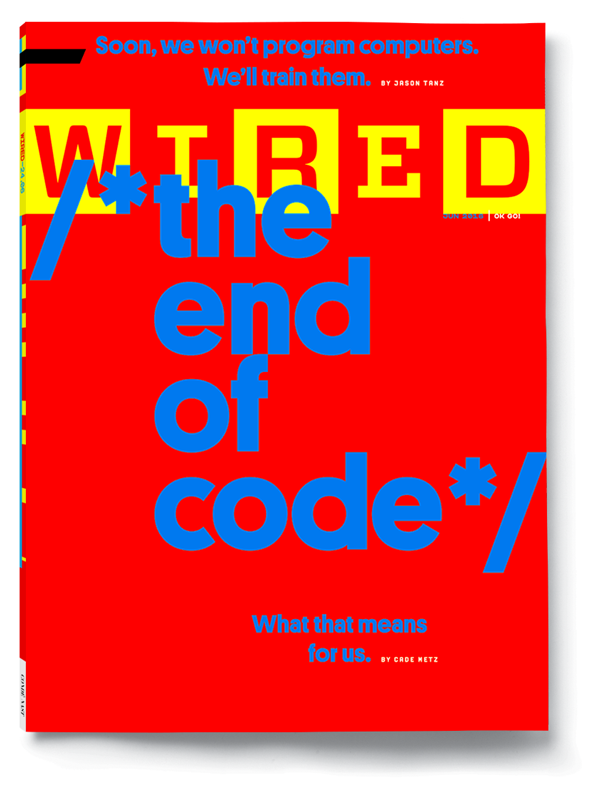

Images. Images. Images. They are the building blocks underpinning the development of ever more powerful image recognition technology and its near relative, face recognition technology. A recent issue of Wired took a deep dive into machine learning, the foundation of AI. This approach, which relies on “training” a computer rather than coding, is now far more powerful thanks to “deep neural networks.”

These are massively distributed computational systems that mimic the multilayered connections of neurones in the brain. As a simple example, to train a computer to recognise a photo of an elephant, developers “show” it thousands of photos, including many with elephants. The more images, the better the computer learns. Take this process to its next logical steps, and billions of images down the road, behold the birth of image recognition technology.
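The idea of “showing” a computer labelled examples can be sketched in a few lines of Python. This is only an illustration under loud assumptions: the “photos” are synthetic lists of pixel brightnesses, and the model is a simple nearest-centroid classifier rather than a deep neural network. The names `make_image`, `train` and `predict` are hypothetical and exist only for this sketch.

```python
import random

def make_image(label, rng, size=16):
    """Hypothetical stand-in for a photo: a flat list of pixel brightnesses.
    By assumption, 'elephant' images are brighter on average than 'other' ones."""
    base = 0.7 if label == "elephant" else 0.3
    return [min(1.0, max(0.0, rng.gauss(base, 0.15))) for _ in range(size)]

def train(examples):
    """Learn one average image (centroid) per label from labelled examples."""
    sums, counts = {}, {}
    for pixels, label in examples:
        acc = sums.setdefault(label, [0.0] * len(pixels))
        for i, p in enumerate(pixels):
            acc[i] += p
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}

def predict(model, pixels):
    """Return the label whose centroid is closest to the given image."""
    def dist(centroid):
        return sum((a - b) ** 2 for a, b in zip(centroid, pixels))
    return min(model, key=lambda label: dist(model[label]))

rng = random.Random(0)  # fixed seed so the run is repeatable
labels = ["elephant", "other"]

# "Show" the computer 200 labelled examples of each kind.
training = [(make_image(l, rng), l) for l in labels for _ in range(200)]
model = train(training)

# Check how often it recognises images it has never seen before.
test_set = [(make_image(l, rng), l) for l in labels for _ in range(100)]
accuracy = sum(predict(model, px) == l for px, l in test_set) / len(test_set)
print(f"accuracy on unseen images: {accuracy:.2f}")
```

Real image recognition systems learn far richer features than average brightness, but the workflow is the same: labelled examples go in, and a model that can label new images comes out.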

Image recognition technology has moved rapidly ahead. Today, it can correctly analyse objects, faces, places, colours, logos and more. This also means that any company with access to large collections of photos wields a new knowledge-gathering superpower: data mining. It’s a competitive edge that leapfrogs previous information-gathering and market research tactics, with the capacity to drill down to a granular level of user insight. Image recognition technology is predicted to be a $30 billion market by 2020.

AI-empowered fashionistas

In the retail space, Macy’s set the pace in 2014 with the launch of an iOS app allowing shoppers to upload a photo, find an equivalent product on Macys.com and purchase it immediately. The result: instant gratification for shoppers and a doubling of mobile sales in fiscal 2015 for Macy’s.

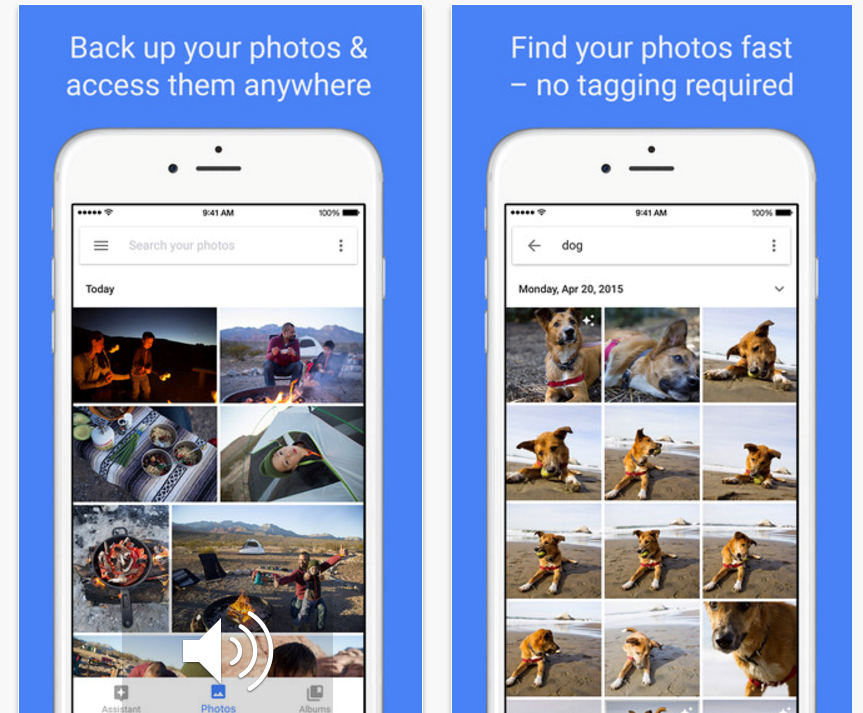

Google, not surprisingly, moved in quickly with the Google Photos app, which hit 100 million users in its first five months. It allows users to store, organise, catalogue and search their images. However, just as there’s no such thing as a free lunch, there is a lucrative strategy behind Google’s free cloud-based app. Google has effectively deployed armies of users, who are relabelling and reorganising their images, to provide a vast dataset. This allows Google to hone and re-hone algorithms and thereby dramatically improve visual searches and expand services.

So where’s the DAM value?

As the buzz around image recognition technology grows louder, we look to digital asset management (DAM), which is the storing, managing and sharing of images, videos, logos and ads. DAM was once relegated to organising and archiving the massive photo collections of large newspapers. Today it’s a must for all media outlets. Moreover, the exponential developments in AI are trickling down to give DAM greater potential than ever.

As a starting point, there is the matter of just clearing obstacles. “I have seen many clients with gigabytes of assets wonder whether to go through the tremendous effort of manually assigning metadata to them,” wrote Martin Jacobs, Group Vice President, Data Driven Marketing Technology at Razorfish.

Few will argue it’s not worth it. And there is little excuse, now that it’s automated. Yet the benefits of a DAM strategy empowered by image recognition technology go beyond salvaging resources and saving time.

“It’s all about unlocking more value in your assets,” said Chris Carr, MerlinX Development Manager at MerlinOne, a DAM vendor. “An asset has no value if you can’t find it or if you don’t know if you have permission to use it.”

Effective asset management, Carr added, is dependent on metadata, which is traditionally provided by content creators and maintainers, such as photographers, writers, videographers or dedicated librarians. Yet against competing priorities, applying meta tags is a loathsome, Herculean task that can easily fall by the wayside. “AI feature recognition fills a gap for cases where no metadata exists and no human is editing metadata,” he explained.
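To make the gap-filling idea concrete, here is a minimal sketch of how a DAM system might apply machine-suggested tags only when no human-entered metadata exists. It is not MerlinOne’s implementation: the `Asset` class, the `fill_missing_keywords` function, the 0.8 confidence threshold and the shape of the `predictions` dictionary (label mapped to a confidence score from some recognition service) are all assumptions made for illustration.

```python
from dataclasses import dataclass, field

@dataclass
class Asset:
    """Hypothetical DAM record for one image."""
    filename: str
    keywords: list = field(default_factory=list)       # entered by a human
    auto_keywords: list = field(default_factory=list)  # suggested by a machine

def fill_missing_keywords(asset, predictions, min_confidence=0.8):
    """Add machine-suggested tags only when a human has supplied none.

    `predictions` is assumed to map a label to a confidence between 0 and 1,
    as an image recognition service might return.
    """
    if asset.keywords:
        return asset  # human metadata exists, so leave the asset untouched
    asset.auto_keywords = sorted(
        label for label, score in predictions.items() if score >= min_confidence
    )
    return asset

tagged = Asset("parade.jpg", keywords=["parade", "city hall"])
untagged = Asset("IMG_0042.jpg")

fill_missing_keywords(tagged, {"crowd": 0.95})
fill_missing_keywords(untagged, {"elephant": 0.97, "zoo": 0.85, "dog": 0.12})

print(tagged.auto_keywords)    # []
print(untagged.auto_keywords)  # ['elephant', 'zoo']
```

Keeping machine suggestions in a separate field from human keywords is one possible design choice: it lets an editor review or override the automated tags later without losing track of where each one came from.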

Watch this space

Image recognition continues to achieve impressive new milestones, and DAM will evolve accordingly. Expected advances include identifying foreground and background colour information, reporting on the usage of assets across the web, and predicting how memorable an image is likely to be to humans. The technology also holds great promise for strengthening content more broadly, and publishers can take advantage of this to drive commerce to their sites. Last year, for example, the BBC used facial recognition to measure whether native ads work. The study revealed that emotional involvement with a native campaign was heightened when brand labelling was made clear.

As for the bottom line, these AI-driven innovations may be subtle compared to the highly celebrated, but yet-to-be-mainstreamed, driverless cars and personal robotics. But they are here today, proving their worth for publishers.
