Duke Reporters’ Lab on using AI to fight fake news

It is perhaps an ironic twist of fate that one perceived threat to society may end up saving it from another, but that is exactly the concept that Mark Stencel and his team at Duke University are exploring. The Duke University Reporters’ Lab studies the impact of political fact-checking in journalism around the world and coordinates research on new technologies that automate and accelerate the fact-checkers’ reporting process. In other words, AI could end up being what saves us from fake news.

“Technology could do so much to improve the reporting process,” says Mark Stencel, co-director of the Reporters’ Lab. “As well as the process by which journalists disseminate their work. But it’s so rare that newsrooms actually get that technology and use it in a way that makes that kind of impact.”

“So we’ve been working with some of the political fact checkers in the US and around the world, to uncover some clues and hints as to how that could be done, in a way that’s more realistic for news organisations. Small and medium news organisations just have a hell of a time figuring out how to make this stuff work – they’re so busy, they’re so cash strapped, they don’t always have the humans they need to do this kind of work, but these are problems that really can be conquered.”

Conquerable maybe, but with so much fake news now seemingly out there, the question of where to start springs to mind. Stencel says that we now have two distinct forms of fake news doing the rounds in our day-to-day lives: traditional and official. So, there are the sensationalised stories that have always emanated from the tabloid press, and now – more worryingly – deliberately disseminated misinformation, often from political sources. The latter is much more difficult to fight.

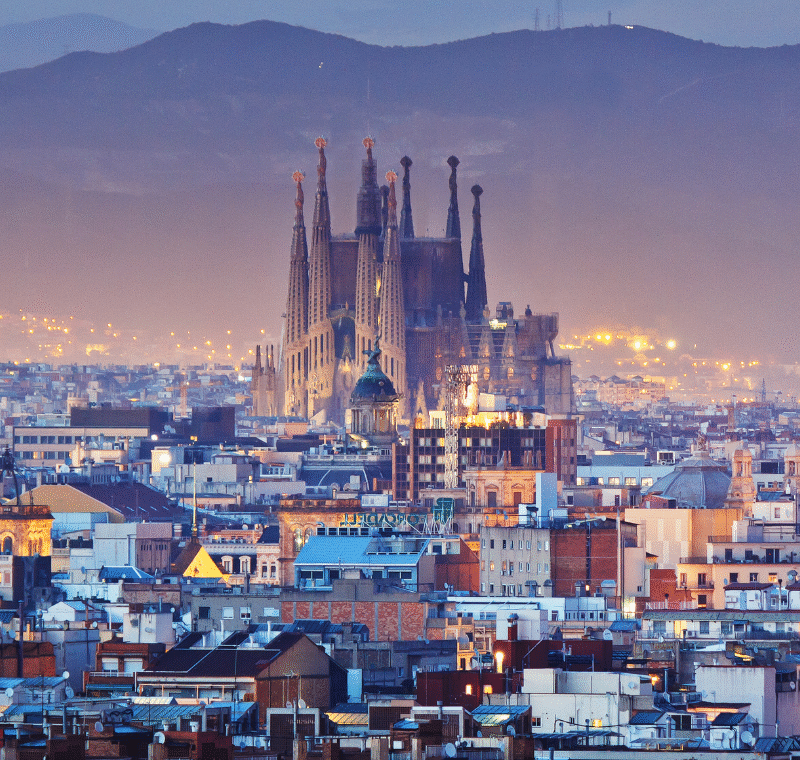

Fact-checking sites around the world (reporterslab.org)

“There are really two kinds of fact checking that are going on out there. There’s the fact checking of what I think of as unofficial misinformation (the stuff that people call fake news). That’s often about verifying hoaxes and things like that and it’s a very widespread problem. People like Snopes have been fighting it long before any of us ever heard that there was such a thing as fake news. It’s a big problem, but I’m not sure in reality if it’s so much bigger a problem than it was when people could see trashy headlines in grocery store checkout lines. That also had a big impact and a big reach. I think we’ve always been competing with that kind of misinformation. It’s a problem, people are doing smart things to try to combat it.”

“And then the other kind of fact checking focusses on official misinformation. So this is when politicians, prominent people in society, prominent organisations, political parties, companies make statements that are known to be false and inaccurate. Fact checkers do a different kind of fact checking to verify those claims, because they’re not always as easy as ‘is that photo made up or not?’ It ends up being more about, ‘Are those numbers exactly right? Is that misleading? Is it really going up and down?’ It involves a lot of really complicated reporting. Both forms are complicated, they’re different problems, technology can help solve both.”

Official fake news is not just difficult to fight from a physical point of view. The battle for hearts and minds has become an increasingly important issue for the media in recent years. As Jim Edwards, editor-in-chief for Business Insider UK, told FIPP at the beginning of the year: “It’s not simply that some people can’t tell the difference between real news and fake news. Rather, some people want fake news. They want ‘alternative facts’.”

This represents a worrying trend in our society, one whose flames have in part been fanned by the rise of social media in recent years: opinion seems to be given just as much weight as solid fact. How, then, can a media industry that has built its very existence on the fair and accurate presentation of truth convince hardliners that the fabrications spouted by their political candidates are nothing more than lies?

“Right, I mean it’s kind of a polite dinner conversation effect, as in how do you tell somebody that someone they like is a lying liar? So this is always hard. And whether it’s Donald Trump, or Hillary Clinton, or any number of politicians across Europe and around the world, people have a strong affinity for the political leaders they support, so correcting misinformation about them with their key supporters is often very hard. We are looking at ways to present that information more effectively to audiences that may not be receptive to certain fact checkers’ findings.”

“That doesn’t mean you pull your punches. It may mean the way you write a headline, it may be how you present the conclusion. Should the conclusion be at the top of the story? ‘This is wrong and here’s why?’ Or should the conclusion be at the bottom of the story? As like, ‘Well we’ve walked you through the evidence and in the end that just leads us to the fact that this was wrong’. And there’s a lot of different ways at it. A lot of user testing, a lot of experimentation will help us figure out what works best.”

If we look specifically at the issue of Donald Trump in the US, who was recently forced to issue a correction on his own testimony that he couldn’t see why it ‘would’ be Russia who meddled in the US Election (apparently he meant ‘wouldn’t’), is work being done to engage with and inform these political demographics?

“Yeah PolitiFact has actually got a grant to go into very red state parts of the United States to try to cultivate a different kind of relationship and see if there was a way to explain what they were doing in a way. That was an interesting bit of R&D on their part. The trust in the media issue is really interesting because it’s so tied to party. On the one hand in the US you have a part of the electorate that generally supports the press and a part of the electorate that doesn’t. But when another party was in control of Washington, even that segment of the public didn’t like the press as much. And the segment that usually doesn’t like the press, liked them a little bit more. So it’s a very partisan thing.”

“I don’t know that AI can overcome the viral, primal, tribal nature of politics in the United States or everywhere else for that matter. Trust in the media has got to be built one story at a time and one organisation at a time. I think this technology ultimately helps with it, but it’s a bigger problem than a bit of code is going to be able to fix.”

See Mark’s presentation at the Digital Innovators’ Summit (DIS) 2018. Read the DIS2018 Special Report.

Finally, artificial intelligence itself has caused concern within the industry in recent years, with some believing that robots are coming to ‘take our jobs’. We asked Stencel if this view was in any way congruent with the realities of AI in media that he is seeing on the ground, and if artificial intelligence was indeed the enemy that it has so far been painted to be in certain circles.

“Well I mean, all technology has potentially scary applications, e.g. the idea that we’ll simply deploy AIs to write stories, and then fire all the journalists, because we’ll have AIs to do it. That’s a scary scenario, but I don’t think that’s going to happen.”

“What I think is the more useful application of that kind of technology in newsrooms is the kind of thing we’re doing, which is if I am a team of 2-3 fact checkers at the Washington Post, and I have a lot of things that I could possibly write stories about on any given day or week, is there a bit of code that can go and sift through thousands and thousands of pages of transcripts every day for me, and flag certain statements as being potentially of interest? Then I’m looking at the 10 or 15 or 20 most likely targets. That’s something we’ve built and deployed and is already making fact checkers more efficient. They’re writing stories based on statements they find that way.”

“What’s interesting is, sometimes it’s not even the statement that they find that way that they end up fact checking. It becomes a tip that says ‘Huh. Where did that claim come from?’ And when they investigate it further they eventually realise that it’s a talking point being used by these people, and here’s how it’s been used in multiple places, and it becomes a bigger story. And all because a little robot built by a bunch of college students, pointed out the right line and the right transcript to them.”
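To make the idea concrete, the transcript-sifting step Stencel describes could look something like the rough Python sketch below. This is not the Reporters’ Lab’s own code: the cue-word list, the scoring weights and the function names (`split_sentences`, `score`, `flag_statements`) are all assumptions made up for illustration, and a production tool would most likely rely on a trained model rather than hand-picked keywords.

```python
import re

# Hypothetical cue words that often appear in checkable factual claims.
# This list is an assumption for illustration, not taken from any real tool.
CUE_WORDS = {
    "percent", "million", "billion", "increased", "decreased",
    "highest", "lowest", "more", "fewer", "doubled", "never",
}


def split_sentences(transcript: str) -> list[str]:
    """Naively split a transcript on '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", transcript.strip())
    return [p for p in parts if p]


def score(sentence: str) -> int:
    """Score a sentence: 2 points per number, 1 point per cue word."""
    words = re.findall(r"[a-z]+", sentence.lower())
    numbers = re.findall(r"\d+(?:[.,]\d+)*", sentence)
    return 2 * len(numbers) + sum(1 for w in words if w in CUE_WORDS)


def flag_statements(transcript: str, top_n: int = 15) -> list[tuple[int, str]]:
    """Return up to top_n (score, sentence) pairs with a positive score.

    Highest scores come first; ties keep their order in the transcript.
    """
    scored = [(score(s), s) for s in split_sentences(transcript)]
    flagged = [pair for pair in scored if pair[0] > 0]
    return sorted(flagged, key=lambda pair: pair[0], reverse=True)[:top_n]
```

Run over a day’s transcripts, `flag_statements(text, top_n=15)` would hand a small team a short list of candidate claims to read first. The decision about what is actually worth checking stays with the human fact checkers.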

It is a hugely interesting and important research project that is currently being undertaken by Duke Reporters’ Lab, as the battle against fake news becomes more and more consuming, particularly for news publications. This also represents a more practical and realistic exploration of AI in the media industry than we have been used to seeing in recent times. As we begin to gain a more pragmatic insight into what artificial intelligence can do for the benefit of media and its audiences in the near future, an interesting by-product of this research may well prove to be the understanding that AI is not quite the danger that some have made it out to be, particularly compared with some of the more vehement human spreaders of fake news in recent years.
